Sintrones has launched the EBOX-7000 edge AI GPU computing solution for factory automation and Industrial Internet of Things (IIoT) control systems in large-scale processes such as manufacturing and mining.

Canon Medical Systems will use NVIDIA DGX systems to process large volumes of medical data generated by Abierto VNA, its proprietary in-house medical data management system launched in January.

Monash University is taking research to another level with the launch of M3, the third-generation supercomputer available through the MASSIVE (Multi-modal Australian ScienceS Imaging and Visualisation Environment) facility.

Powered by ultra-high-performance NVIDIA Tesla K80 GPU accelerators, M3 will provide new simulation and real-time data processing capabilities to a wide selection of Australian researchers.

“Our collaboration with NVIDIA will take Monash research to new heights. By coupling some of Australia’s best researchers with NVIDIA’s accelerated computing technology we’re going to see some incredible impact. Our scientists will produce code that runs faster, but more significantly, their focus on deep learning algorithms will produce outcomes that are smarter,” said Professor Ian Smith, Vice Provost (Research and Research Infrastructure), Monash University.

Accelerated systems, or GPU-powered systems, for the first time accounted for more than 100 of the world’s 500 most powerful supercomputers. Together they deliver 143 petaflops, over one-third of the list’s total FLOPS.

NVIDIA Tesla GPU-based supercomputers comprise 70 of these systems – including 23 of the 24 new systems on the list – reflecting compound annual growth of nearly 50 percent over the past five years.

There are three primary reasons accelerators are being increasingly adopted for high performance computing.

Virtualisation just got a little turbo charge with the introduction of NVIDIA GPU-enabled professional graphics applications and accelerated computing capabilities to the Microsoft Azure cloud platform.

Microsoft is the first to leverage NVIDIA GRID 2.0 virtualised graphics for its enterprise customers.

Businesses can choose the level of graphics capability that suits their needs. They can deploy NVIDIA Quadro-grade professional graphics applications and accelerated computing on-premises, in the cloud through Azure, or via a hybrid of the two, using both Windows and Linux virtual machines.

At GTC South Asia, Monash University shared how it has leveraged GPU technology to transform the way research is done. Entelechy Asia catches up with the university’s Professor Paul Bonnington (Professor and Director of E-research […]

NVIDIA has established the NVIDIA Technology Centre Asia Pacific, the first of its kind in the region to focus on deep learning research and development (R&D).

Located at Nanyang Technological University in Singapore, the centre will leverage the power of NVIDIA’s graphics processing unit (GPU) platforms to develop innovative solutions for both the private and public sectors.

NVIDIA will invest more than S$20 million (US$14.2M) in the centre over a three-year period. This will include the deployment of a broad range of NVIDIA technologies — from the NVIDIA DRIVE PX platform for automated driver assistance systems and self-piloted vehicles, to the NVIDIA Tesla platform, which powers deep learning on the world’s top 5 public clouds as well as some of the world’s fastest supercomputers.

NVIDIA has posted sterling results in Q2, driven by strong growth in gaming, datacentre and cloud, and mobile. Q2 revenue hit US$1.10 billion, up 13 percent from $977 million a year earlier. Revenue for the first […]

IBM and NVIDIA plan to collaborate on GPU-accelerated versions of IBM’s wide portfolio of enterprise software applications — taking GPU accelerator technology for the first time into the heart of enterprise-scale data centres.

The collaboration aims to enable IBM customers to more rapidly process, secure and analyse massive volumes of streaming data.

“Harnessing GPU technology to IBM’s enterprise software platforms will bring advanced, in-memory processing to a wider variety of new application areas,” said Sean Poulley, Vice President of Databases and Data Warehousing at IBM. “We are looking at a new generation of higher-performance solutions to help data center customers overcome their most challenging computing problems.”

NVIDIA has launched the NVIDIA GeoInt Accelerator, the world’s first GPU-accelerated geospatial intelligence platform, to enable security analysts to find actionable insights more quickly and more accurately than ever before from vast quantities of raw data, images and video.

The platform provides defence and homeland security analysts with tools that enable faster processing of high-resolution satellite imagery, facial recognition in surveillance video, combat mission planning using geographic information system (GIS) data, and object recognition in video collected by drones.

It offers a complete solution consisting of an NVIDIA Tesla GPU accelerated system, software applications for geospatial intelligence analysis, and advanced application development libraries.

By Edward Lim, Managing Consultant, CIZA Concept

Established in 2006 as a research institute at the National University of Singapore (NUS), the NUS Risk Management Institute (RMI) is dedicated to financial risk management. Its establishment was supported by the Monetary Authority of Singapore (MAS) under its program on Risk Management and Financial Innovation.

In 2009, RMI embarked on a non-profit Credit Research Initiative (CRI) in response to the financial crisis, with the intent to spur research and development in the critical area of credit rating. Rather than being just a typical research project, it wanted to demonstrate the operational feasibility of its research and become a trusted source of credit information.

CRI currently covers more than 35,000 companies in 106 economies in Asia-Pacific, North America, Europe, Latin America, Africa, and the Middle East.

NVIDIA Tesla GPU accelerators are powering the world’s two most energy efficient supercomputers, according to the latest Green500 list published last week.

The winning system is Eurora at CINECA, Italy’s largest supercomputing centre, in Casalecchio di Reno. Equipped with NVIDIA Kepler architecture-based GPU accelerators – the highest-performance, most efficient accelerators ever built – Eurora delivers 3,210 MFlops per watt, making it 2.6 times more energy efficient than the best system using Intel CPUs alone (at Météo France). It also greatly surpasses the most efficient Intel Xeon Phi accelerator-based system, Beacon, at the National Institute for Computational Sciences, at the University of Tennessee.

The number two system on the June 2013 Green500 list is the Aurora Tigon supercomputer at the Selex ES facility in Chieti, Italy.

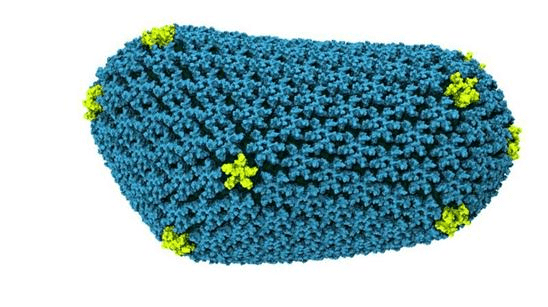

Researchers at the University of Illinois at Urbana-Champaign (UIUC) have achieved a major breakthrough in the battle to fight the spread of the human immunodeficiency virus (HIV) using NVIDIA Tesla GPU accelerators.

Featured on the cover of the latest issue of Nature, the world’s most-cited interdisciplinary science journal, a new paper details how UIUC researchers collaborating with researchers at the University of Pittsburgh School of Medicine have, for the first time, determined the precise chemical structure of the HIV “capsid,” a protein shell that protects the virus’s genetic material and is a key to its virulence. Understanding this structure may hold the key to the development of new and more effective antiretroviral drugs to combat a virus that has killed an estimated 25 million people and infected 34 million more.

UIUC researchers uncovered details of the capsid structure by running the first all-atom simulation of HIV on the Blue Waters supercomputer. Powered by 3,000 NVIDIA Tesla K20X GPU accelerators – the highest-performance, most efficient accelerators ever built – the Cray XK7 supercomputer gave researchers the computational performance to run the largest simulation ever published, involving 64 million atoms.

Italy’s “Eurora” supercomputer has set a new record for data centre energy efficiency. Based on NVIDIA Tesla GPU accelerators, it was built by Eurotech and deployed at the Cineca facility in Bologna, Italy’s most powerful supercomputing centre. Eurora reached 3,150 megaflops per watt of sustained performance – a mark 26 percent higher than the top system on the most recent Green500 list of the world’s most efficient supercomputers.

Eurora set the record by combining 128 high-performance, energy-efficient NVIDIA Tesla K20 accelerators with the Eurotech Aurora Tigon supercomputer, featuring innovative Aurora Hot Water Cooling technology, which uses direct hot water cooling on all electronic and electrical components of the HPC system.

Available to members of the Partnership for Advanced Computing in Europe (PRACE) and major Italian research entities, Eurora will enable scientists to advance research and discovery across a range of scientific disciplines, including material science, astrophysics, life sciences, and Earth sciences.