Eviden and AMD have been chosen to build Alice Recoque, France’s first exascale supercomputer and Europe’s second. Designed to support high-performance computing (HPC) and AI, Alice Recoque is named after Alice Arnaud Recoque, a pioneering […]

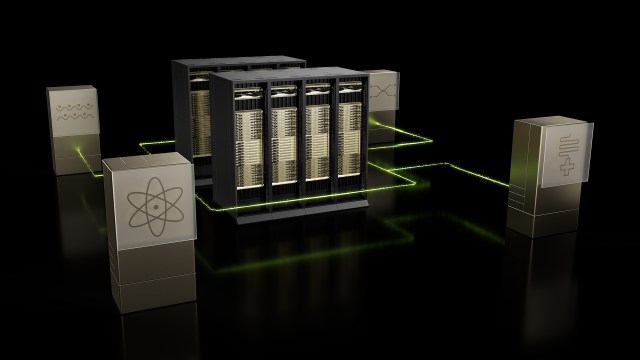

NVIDIA is teaming up with Japan’s leading national research institute RIKEN to deploy two next-generation supercomputers designed specifically for AI-driven scientific discovery and quantum computing research. Announced at the Supercomputing 25 conference in St Louis, […]

HPE has revealed its next-generation Cray supercomputing portfolio that comes complete with new compute blades, unified management software, and high-performance interconnects to boost AI productivity and scientific discoveries. This launch is particularly significant for fast-developing […]

NVIDIA has launched NVQLink, an open system architecture that tightly couples quantum processors with high-performance GPU supercomputers. NVQLink enables hybrid quantum-classical systems that significantly enhance quantum error correction and control algorithms. By providing a low-latency, […]

Hewlett Packard Enterprise (HPE), AMD and Oak Ridge National Laboratory (ORNL) have joined forces to launch the Discovery exascale supercomputer and the Lux AI cluster. Discovery deepens AMD and HPE’s partnership with the Department of […]

NVIDIA has launched the DGX Spark, the world’s smallest AI supercomputer, to bring petaflop-level performance to developers. The compact desktop enables local AI development by supporting inference on models with up to 200 billion parameters […]

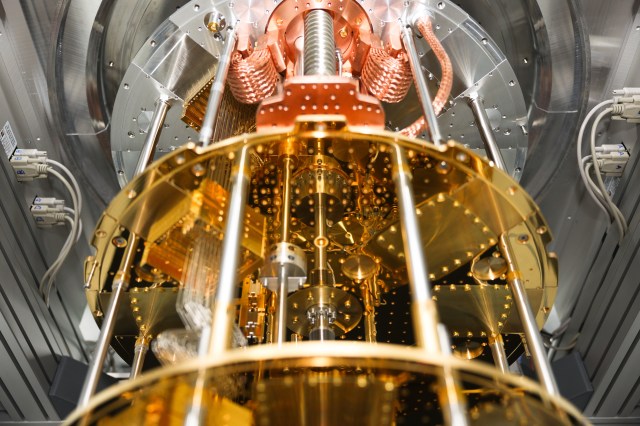

Finnish quantum computing firm IQM is integrating its 20-qubit IQM Radiance quantum computer into Oak Ridge National Laboratory (ORNL) in Tennessee, USA. This marks ORNL’s first incorporation of an on-premises quantum computer directly into its […]

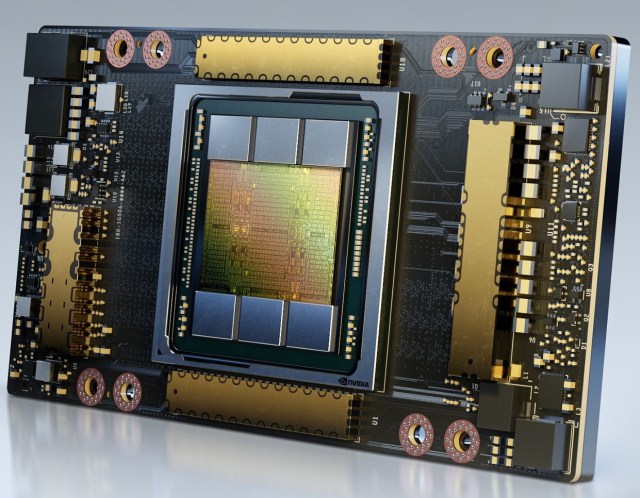

La Trobe University has become the first Australian university to commission the NVIDIA DGX H200 supercomputer. Hosted at NEXTDC’s M2 data centre in Melbourne, the new system is set to accelerate breakthroughs in immunotherapies, cancer […]

NVIDIA plans to manufacture its AI supercomputers entirely within the United States for the first time, marking a significant shift in the global technology supply chain. In partnership with leading manufacturers such as TSMC, Foxconn, […]

NVIDIA plans to build the NVIDIA Accelerated Quantum Research Center (NVAQC) in Boston to advance quantum computing by integrating leading quantum hardware with AI supercomputers. NVAQC will enable accelerated quantum supercomputing, tackling some of quantum […]

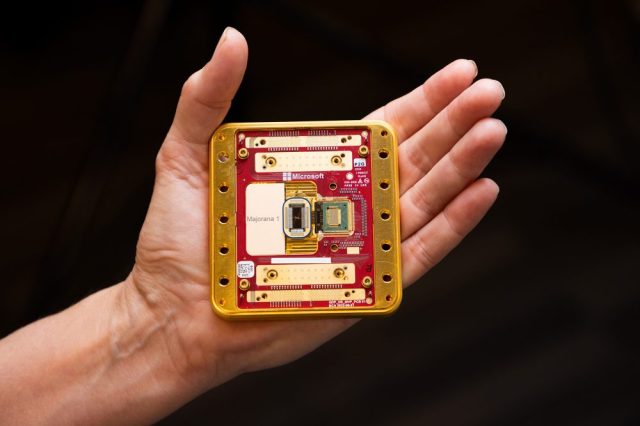

Microsoft has introduced the Majorana 1 quantum chip that is expected to bring quantum computers capable of solving complex industrial-scale problems within years rather than decades. Powered by a new Topological Core architecture, the processor […]

Cyberport has opened the Artificial Intelligence Supercomputing Centre (AISC), the first of its kind in Hong Kong, which officially commenced operations on December 9, 2024. The centre’s launch, accompanied by the opening of an AI […]

NVIDIA and Google Quantum AI are pushing the boundaries of quantum computing by leveraging the NVIDIA CUDA-Q platform to accelerate the design of next-generation quantum devices. Google Quantum AI is harnessing the power of NVIDIA’s […]

Singapore’s National Supercomputing Centre (NSCC) has launched the Aspire 2A+ supercomputer to advance artificial intelligence (AI) research in Singapore. Powered by NVIDIA’s DGX SuperPOD technology, the system boosts Singapore’s computational arsenal and represents […]

Foxconn and NVIDIA have joined forces to build the island’s fastest and most powerful supercomputer, positioning Taiwan as a formidable contender in the global AI race. Revealed at Hon Hai Tech Day, the Hon Hai […]

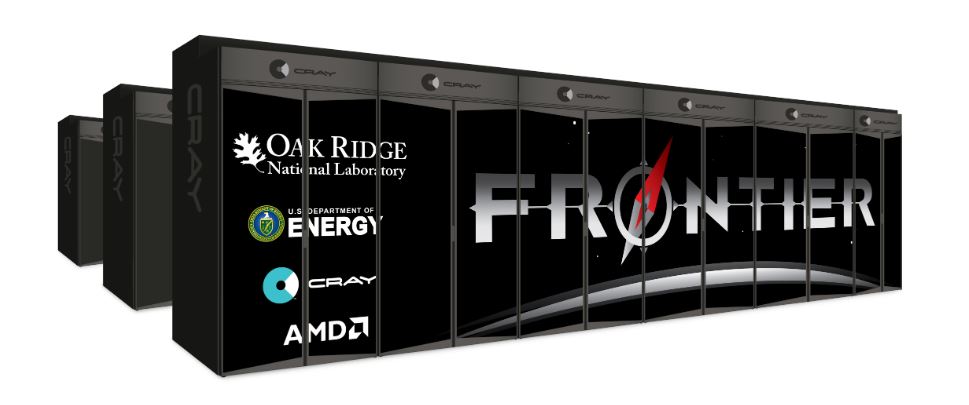

The Frontier supercomputer at Oak Ridge National Lab remains the world’s fastest supercomputer for the third consecutive year. Powered by AMD EPYC CPUs and AMD Instinct GPUs, the supercomputer achieved a staggering High-Performance Linpack (HPL) […]

The Miyabi supercomputer in Japan is using NVIDIA Grace Hopper superchips to power its advanced high-performance computing (HPC) initiatives. Jointly operated by the Center for Computational Sciences at the University of Tsukuba and the Information Technology […]

Japan’s new ABCI-Q supercomputer will be powered by NVIDIA platforms for accelerated and quantum computing. Designed to advance the nation’s quantum computing initiative, ABCI-Q will enable high-fidelity quantum simulations for research across industries. The supercomputer […]

By KL Lim

Australia’s Pawsey Supercomputing Research Centre will be integrating the NVIDIA CUDA Quantum platform into its National Supercomputing and Quantum Computing Innovation Hub. This move marks a significant leap forward in the pursuit […]

NVIDIA has unveiled its Eos data-centre-scale supercomputer, which has clinched the No. 9 spot in the TOP500 list of the world’s fastest supercomputers. Named after the Greek goddess believed to open the gates of dawn […]

Singapore’s first supercomputer for the healthcare sector is set to improve patient care and resource allocation. Fully operational since July 31, the Prescience supercomputer lets National University Health System (NUHS) staff use AI to estimate […]

NVIDIA, Rolls-Royce and Classiq have achieved a quantum computing breakthrough aimed at improving the efficiency of jet engines. Using NVIDIA’s quantum computing platform, the companies have designed and simulated the world’s largest quantum computing circuit […]

Mitsui is collaborating with NVIDIA on the Tokyo-1 generative AI supercomputer to supercharge Japan’s US$100 billion pharma industry. Tokyo-1 features an NVIDIA DGX AI supercomputer that will be accessible to Japan’s pharma companies and startups. […]

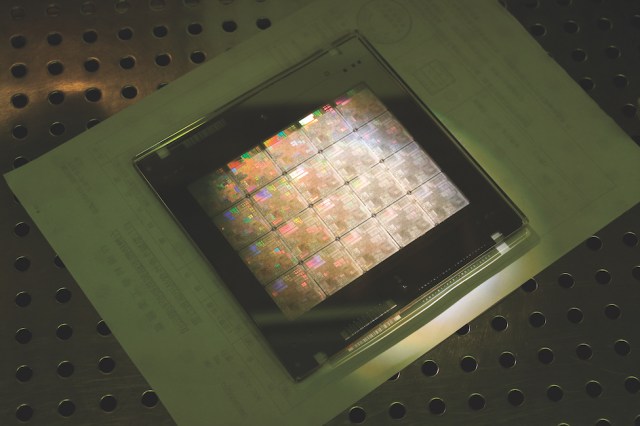

A breakthrough in computational lithography will enable chipmakers to accelerate the design and manufacturing of next-generation chips. Lithography is the process of creating patterns on a silicon wafer. The new NVIDIA cuLitho software library for […]

Taiwan’s National Tsing Hua University NTHU-CM team has emerged as champion and won the best big data analytics performance at the APAC HPC-AI student competition. Southern University of Science and Technology and National Tsing Hua […]

Hewlett Packard Enterprise (HPE) has expanded its supercomputer offerings to make supercomputing accessible to more enterprises. The new supercomputers are the HPE Cray EX2500, XD2000 and XD6500. Based on the same architecture as the HPE […]

Baidu has introduced Qian Shi (乾始), its first superconducting quantum computer that fully integrates hardware, software and applications. Located at Baidu’s Quantum Computing Hardware Lab in Beijing, Qian Shi incorporates its hardware platform with Baidu’s […]

Quantum research and development (R&D) has received a boost with the announcement of the NVIDIA Quantum Optimized Device Architecture (QODA). The unified computing platform aims to make quantum computing more accessible with a coherent hybrid […]

Seventeen months after NVIDIA announced its intention to acquire Arm, the deal has been called off. Rumours of the end of the agreement were swirling this morning with the media working in overdrive to confirm […]

Meta has just taken AI up another notch with its newly-designed and built AI Research SuperCluster (RSC) which is believed to be among the fastest AI supercomputers running today with 760 NVIDIA DGX A100 systems […]

NVIDIA has acquired Bright Computing which develops software for managing high performance computing (HPC) systems. Founded in 2009 and headquartered in Amsterdam, Bright Computing is used by more than 700 organisations worldwide, including Boeing, Johns […]

Thailand’s National Science and Technology Development Agency (NSTDA) is serious about its mission to accelerate science, technology and innovation development in the country. It is deploying a whopping 704 NVIDIA A100 Tensor Core GPUs for […]

Earth-2 was first mentioned by NVIDIA CEO Jensen Huang at the close of his GPU Technology keynote address earlier this week. It’s obviously a topic that’s close to his heart because he has written a […]

Taiwan’s National Tsing Hua University (NTHU) has done it again — winning the ASC Student Supercomputer Challenge for the second year running. It outperformed more than 300 teams from prestigious universities around the world to […]

The numbers are insane. 1 powerful AI supercomputer = 4 exaflops of AI performance, driven by 6,159 NVIDIA A100 Tensor Core GPUs. That’s the Perlmutter, the new fastest AI supercomputer named after astrophysicist Saul Perlmutter. It […]

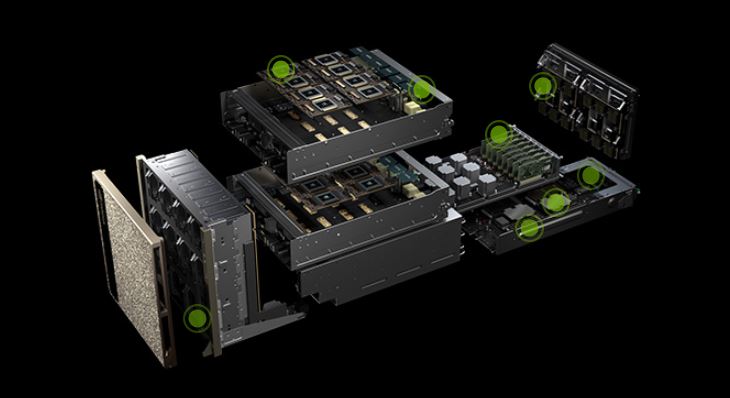

At GTC21, NVIDIA unveiled the next-generation NVIDIA DGX SuperPOD, the world’s first cloud-native, multi-tenant AI supercomputer. It features BlueField-2 data processing units that offload, accelerate and isolate users’ data to provide users with secure connections […]

Under the Biden Administration, the US Department of Commerce is continuing its trend of blacklisting Chinese companies deemed to be linked to or supporting China’s military. The latest strike involves seven supercomputing — Shanghai […]

NVIDIA and a consortium of Thai universities have signed a memorandum of understanding (MoU) on driving research and accelerating scientific breakthroughs in artificial intelligence (AI) and high performance computing (HPC). The partnership will help spur […]

Chengdu plans to make a strong push to advance next-generation artificial intelligence (AI). The capital of China’s Sichuan province is working on developing an AI-centred pilot zone. More than 100 billion yuan (US$15.2 billion) […]

Eight out of the top 10 supercomputers in the world are powered by NVIDIA technology. In fact, the GPU giant is at the heart of nearly 70 percent of the world’s top 500 fastest supercomputers, […]

Japan’s National Institute of Advanced Industrial Science and Technology (AIST) has commissioned Fujitsu to build the “AI Bridging Green Cloud Infrastructure” (ABCI) supercomputer to accelerate AI research and development (R&D) in industry, government, and academia. […]

NVIDIA Founder Jensen Huang and VMware CEO Pat Gelsinger at VMworld 2020.

VMware and NVIDIA are teaming up to transform the enterprise data centre architecture and turn it into an end-to-end AI platform.

After weeks of speculation, it’s now official. NVIDIA will acquire Arm from SoftBank Group Corp (SBG) for US$40 billion.

A Super Micro Computer (Supermicro)-Preferred Networks (PFN) collaboration has achieved the top ranking for the Green500 semi-annual industry assessment.

The Fugaku supercomputer jointly developed by RIKEN and Fujitsu has been crowned the world’s fastest supercomputer in the Top500 list. This follows its achievement as the world’s most efficient supercomputer on the Green500 list in November 2019.

The new NVIDIA Mellanox UFM CyberAI platform minimises downtime in InfiniBand datacentres by harnessing AI-powered analytics to detect security threats and operational issues, as well as predict network failures.

COVID-19 is leaving a trail of cancelled events in its wake. Two IT events scheduled this week in Singapore have been canned.

NVIDIA has introduced the Jetson Xavier NX, which it has dubbed “the world’s smallest, most powerful AI supercomputer for robotic and embedded computing devices at the edge”.

NVIDIA has announced the NVIDIA EGX Edge Supercomputing Platform, which lets organisations deliver next-generation AI, IoT and 5G-based services at scale and with low latency. Announcing this in his keynote address at the opening of Mobile World Congress in Los Angeles, NVIDIA Founder and CEO Jen-Hsun Huang declared that we have entered a new era, where billions of always-on IoT sensors will be connected by 5G and processed by AI.

Giving customers choices and enabling innovation in the high performance computing (HPC) space are the key reasons why NVIDIA is providing support for Arm CPUs.

Taiwan’s new and fastest supercomputer, Taiwania 2, is also the world’s 20th fastest and 10th most energy efficient. Made in Taiwan, it will be used by the academic and research communities at the Taiwan Computing Cloud.

Hewlett-Packard Enterprise (HPE) is on the acquisition trail again with the purchase of supercomputing pioneer Cray for US$1.3 billion.

The race to have the world’s fastest supercomputer has taken on a new spin with the US Department of Energy commissioning Cray to build the most powerful yet by 2021. Called Frontier, the new 1.5-exaflop supercomputer will be built on Cray’s Shasta supercomputing platform using AMD EPYC CPUs and Radeon Instinct GPUs.

NVIDIA has reached a US$6.9 billion agreement to acquire Mellanox, a supplier of end-to-end Ethernet and smart interconnect solutions and services for servers and storage.

It’s no dinosaur but the newly-announced NVIDIA Titan RTX, dubbed T-Rex, is certainly very powerful — to the tune of 130 teraflops of deep learning performance and 11 GigaRays of ray-tracing performance.

A six-person team from Nanyang Technological University (NTU) has achieved 56.51 teraflops, the highest Linpack score — a measurement of a system’s floating point computing horsepower — in a global supercomputing competition held in conjunction with SC18 in Dallas.

The world’s top two supercomputers, the US Department of Energy’s Summit at Oak Ridge National Laboratory and Sierra at Lawrence Livermore National Lab, pack a combined total of more than 40,000 NVIDIA V100 Tensor Core GPUs, according to the latest Top500 list released in conjunction with SC18.

Taiwan’s China Medical University Hospital (CMUH) has become the first healthcare provider in Asia to deploy and operate the NVIDIA DGX-2 AI supercomputer.

The first NVIDIA AI Conference in Sydney on September 4 will kick off with two keynote addresses. Marc Hamilton, Vice President of Solutions Architecture and Engineering, NVIDIA, will talk about Transforming Industries With AI. Jason Humphrey, Head of Retail Risk, ANZ Bank, will then speak on Creating the Infrastructure to Undertake Deep Learning.

NetApp has introduced NetApp ONTAP AI proven architecture to simplify, accelerate and scale the data pipeline across edge, core and cloud for deep learning deployments and to help customers achieve real business impact with AI.
