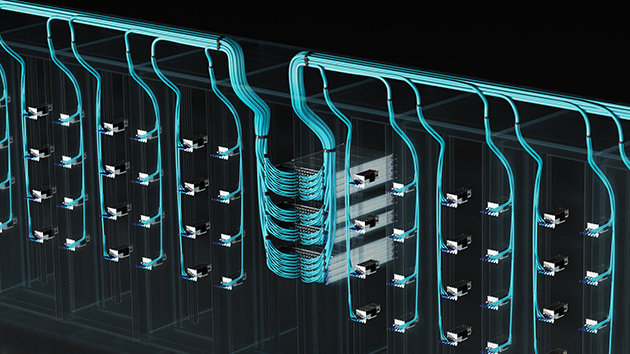

NVIDIA plans to manufacture its AI supercomputers entirely within the United States for the first time, marking a significant shift in the global technology supply chain. In partnership with leading manufacturers such as TSMC, Foxconn, […]

NVIDIA’s Blackwell platform has taken pole position in the latest MLPerf Inference V5.0 benchmarks, setting a new standard in artificial intelligence (AI) inference performance. These results highlight the platform’s ability to tackle some of the […]

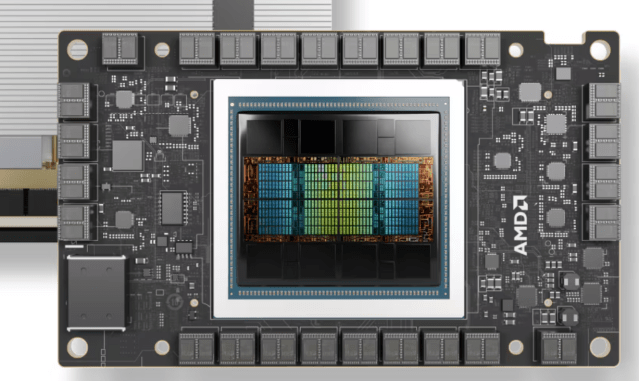

Rapt AI is collaborating with AMD to enhance AI workload management and inference performance. The partners will integrate Rapt’s intelligent workload automation platform with AMD’s Instinct MI300X, MI325X, and upcoming MI350 series GPUs, to provide […]

NVIDIA has released a massive, open-source dataset designed to accelerate the development of physical AI in robotics and autonomous vehicles (AVs). Unveiled at GTC in San Jose, the dataset aims to provide researchers and developers […]

NVIDIA plans to build the NVIDIA Accelerated Quantum Research Center (NVAQC) in Boston to advance quantum computing by integrating leading quantum hardware with AI supercomputers. NVAQC will enable accelerated quantum supercomputing, tackling some of quantum […]

ST Telemedia Global Data Centres (STT GDC) has become an NVIDIA colocation partner, after two of its data centre facilities in Southeast Asia (SEA) – STT Singapore 6, and STT Bangkok 1 – received NVIDIA […]

NVIDIA’s GTC this year will focus on the future of AI and quantum computing. To be held in San Jose from March 17 to 21, the AI conference is expected to bring together 25,000 in-person […]

Indosat Ooredoo Hutchison (IOH) is collaborating with Nokia and NVIDIA to deploy Indonesia’s first artificial intelligence radio access network (AI-RAN), which is expected to significantly improve the 5G experience for millions of Indonesians. The first […]

ResetData has launched Australia’s first sovereign public AI-Factory (AI-F1) and an AI Marketplace. Targeted to commence operations in Q2, this facility in Melbourne’s Central Business District will provide access to advanced AI capabilities for businesses […]

NVIDIA has rolled out Project Digits, a personal AI supercomputer that brings unprecedented computing power to the desks of researchers, data scientists, and students worldwide. Powering Project Digits is the NVIDIA GB10 Grace Blackwell Superchip […]

At his opening keynote at CES 2025, NVIDIA Founder and CEO Jensen Huang (above) revealed the much-anticipated GeForce RTX 50 series GPUs. Powered by the Blackwell architecture, this new generation of graphics cards comes packed […]

Schneider Electric has announced end-to-end AI-ready data centre solutions to tackle the pressing energy and sustainability challenges posed by the increasing demand for AI systems. Its latest innovations include a new data centre reference design […]

NVIDIA Founder and CEO Jensen Huang (above) has deepened his commitment to making Vietnam NVIDIA’s “second home” by setting up the company’s first research and development centre in the country. NVIDIA has teamed up with […]

A team of researchers has developed a generative AI model called Fugatto that is set to transform the audio landscape, offering users unparalleled control over sound creation and manipulation. Fugatto is a versatile tool that […]

Pure Storage has unveiled the Pure Storage GenAI Pod to make AI more accessible and manageable for businesses across various industries. GenAI Pod is designed to address the complexities that enterprises face when implementing GenAI […]

NVIDIA and Google Quantum AI are pushing the boundaries of quantum computing by leveraging the NVIDIA CUDA-Q platform to accelerate the design of next-generation quantum devices. Google Quantum AI is harnessing the power of NVIDIA’s […]

Indonesia has unveiled its Sahabat-AI sovereign AI initiative that aims to create open-source large language models (LLMs) tailored specifically for Indonesia’s 277 million speakers. The initiative was launched at Indonesia AI Day, showcasing the country’s […]

At NVIDIA AI Summit Japan, NVIDIA and SoftBank have announced a series of initiatives to make Japan a global artificial intelligence (AI) powerhouse. SoftBank is building Japan’s most powerful AI supercomputer using NVIDIA’s Blackwell platform. […]

Singapore’s National Supercomputing Centre (NSCC) has launched the Aspire 2A+ supercomputer to advance artificial intelligence (AI) research in Singapore. Powered by NVIDIA’s DGX SuperPOD technology, the system boosts Singapore’s computational arsenal as well as represents […]

India’s cloud infrastructure providers and server makers are set to increase their NVIDIA GPU deployment nearly tenfold by the end of 2024, compared to 18 months ago. This expansion will introduce tens of thousands of […]

Lenovo and NVIDIA have introduced Lenovo Hybrid AI Advantage with NVIDIA which aims to transform the landscape of enterprise computing and accelerate AI adoption across industries. Designed to address the growing demand for proven AI […]

The year was 1999. Y2K was top of mind. Napster was on the rise. And a new technology was launched that would still be revolutionising the world 25 years on. While the NVIDIA GeForce 256 […]

Foxconn and NVIDIA have joined forces to build the island’s fastest and most powerful supercomputer, positioning Taiwan as a formidable contender in the global AI race. Revealed at Hon Hai Tech Day, the Hon Hai […]

Vast Data has introduced the Vast InsightEngine with NVIDIA solution that aims to transform how enterprises handle and analyse their data. Scheduled for release in early 2025, this platform will empower enterprises to extract valuable […]

NetApp has unveiled a new generative AI (GenAI) data vision and end-to-end integrated solution that will transform how businesses leverage their data for AI applications. The solution combines NVIDIA’s AI software and accelerated computing with […]

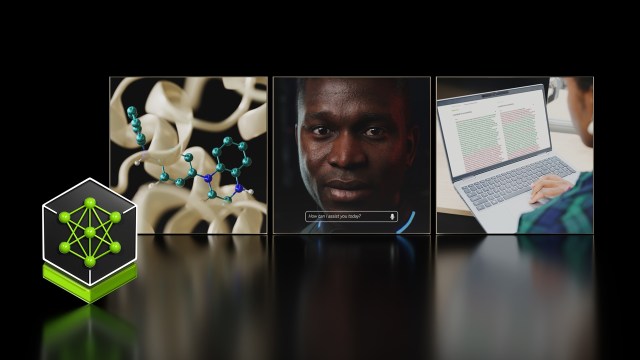

NVIDIA has launched the NIM Agent Blueprints catalogue of pre-trained, customisable workflows to help businesses develop their own custom AI agents. Built on NVIDIA’s NeMo framework, NIM Agent Blueprints provides a suite of software for […]

By KL Lim Computex 2024 hosted 85,179 ICT buyers and professionals in Taipei last week. And one person stood head and shoulders above everyone else: NVIDIA CEO Jensen Huang. He was like the […]

By Edward Lim Singapore has unveiled the Digital Enterprise Blueprint (DEB) to accelerate digital transformation and empower enterprises by leveraging emerging technologies such as artificial intelligence (AI). DEB aims to establish Singapore as a nation […]

Databricks is working with NVIDIA to optimise data and AI workloads on Databricks’ Data Intelligence Platform. Organisations are using the platform to build and customise generative AI (GenAI) solutions trained on their data and tailored […]

HP is collaborating with NVIDIA to develop advanced AI training modules for its Amplify Partner Program. More modules will be released in the future to keep HP Amplify partners ahead in the rapidly evolving AI […]

NVIDIA’s GPU Technology Conference (GTC) will be returning as a physical show after a four-year hiatus due to the pandemic. Started in 2009, the annual global conference for AI developers, engineers, researchers, inventors, and IT […]

Cisco and NVIDIA have teamed up to provide enterprises with easily deployable and manageable AI infrastructure. The collaboration leverages Cisco’s expertise in Ethernet networking and its extensive partner ecosystem, and NVIDIA’s GPU technology that is […]

Equinix has introduced a fully managed private cloud service that lets enterprises seamlessly acquire and oversee their own NVIDIA DGX AI supercomputing infrastructure for the development and operation of bespoke generative AI (GenAI) models. Available […]

Amazon Web Services (AWS) and NVIDIA have expanded their partnership to provide cutting-edge infrastructure, software and services to drive advancements in generative AI for their customers. This enhanced collaboration combines NVIDIA’s latest multi-node systems, including […]

Dropbox is partnering with NVIDIA to bring enhanced AI capabilities to Dropbox users. This collaboration aims to elevate productivity and streamline workflows for millions by tapping into the potential of generative AI. Dropbox plans to expand […]

NVIDIA has introduced an AI foundry service aimed at accelerating the development and fine-tuning of generative AI applications utilising Microsoft Azure. Aimed at both startups and enterprises, the service consolidates three key components: an assemblage […]

At Hon Hai Tech Day in Taipei, NVIDIA Founder and CEO Jensen Huang (left) and Foxconn Chairman and CEO Young Liu announced a collaboration to accelerate the AI industrial revolution. This involves Hon Hai Technology […]

Google Cloud and NVIDIA have teamed up on new AI infrastructure and software for enterprises to build and deploy massive models for generative AI and speed data science workloads. The joint effort provides end-to-end machine […]

Pixar, Adobe, Apple, Autodesk, and NVIDIA, together with the Joint Development Foundation (JDF), have formed the Alliance for OpenUSD (AOUSD) to promote the standardisation, development, evolution, and growth of Pixar’s Universal Scene Description (USD) technology. […]

NVIDIA and Accenture have launched the AI Lighthouse programme designed to fast-track the development and adoption of enterprise generative AI capabilities. Expanding on existing strategic partnerships among ServiceNow, NVIDIA, and Accenture, AI Lighthouse will assist […]

By K L Lim The advent of generative AI has brought coding to the masses. At the opening keynote address of Computex, NVIDIA Founder and CEO Jensen Huang pointed out that everyone can be a […]

NVIDIA, Rolls-Royce and Classiq have achieved a quantum computing breakthrough aimed at improving the efficiency of jet engines. Using NVIDIA’s quantum computing platform, the companies have designed and simulated the world’s largest quantum computing circuit […]

ServiceNow and NVIDIA are teaming up to bring generative AI to enterprises. They are developing powerful, enterprise-grade generative AI capabilities that can transform business processes with faster, more intelligent workflow automation. ServiceNow is using NVIDIA […]

Singtel is bringing computing to the edge for enterprises. It is rolling out the Azure public multi-access edge compute (MEC) available for all enterprises. This unlocks opportunities to experience the advantages of edge computing and […]

At GTC, NVIDIA announced the NVIDIA DGX Quantum, the world’s first GPU-accelerated quantum computing system. Built with Quantum Machines, the system combines two top platforms: the NVIDIA Grace Hopper Superchip and CUDA Quantum open-source […]

AI, metaverse and computer vision are technologies placing new demands on telcos. Telcos are seeking industry-standard solutions that can run such applications and immersive graphics workloads on the same server. NVIDIA is developing a new […]

ChatGPT has taken the world by storm, creating a buzz unseen for a long time. Powered by AI, the tool has gotten many excited about its ability to generate content and answer questions on the […]

Waiting in a parked car will be a lot more fun with GeForce NOW cloud gaming service to be available in cars from Hyundai Motor Group, BYD and Polestar. The new GeForce NOW offering will […]

NVIDIA has joined hands with Hon Hai Technology Group (also known as Foxconn) to develop automated and autonomous vehicle platforms. Under the partnership, Foxconn will produce electronic control units (ECUs) based on NVIDIA Drive Orin […]

Deutsche Bank is partnering with NVIDIA to accelerate the use of artificial intelligence (AI) and machine learning (ML) in the financial services sector. The partnership will support Deutsche Bank’s cloud transformation journey, including using AI […]

Taiwan’s National Tsing Hua University NTHU-CM team has emerged as champion and won the best big data analytics performance at the APAC HPC-AI student competition. Southern University of Science and Technology and National Tsing Hua […]

NVIDIA and Microsoft have teamed up to build one of the most powerful AI supercomputers in the world, powered by Microsoft Azure’s advanced supercomputing infrastructure combined with NVIDIA GPUs, networking and full stack of AI […]

NVIDIA has released the developer preview 2 (DP 2) version of its Isaac ROS software that features new cloud- and edge-to-robot task management and monitoring software for autonomous mobile robot (AMR) fleets. The latest robot […]

Meta has introduced its Grand Teton next-generation AI platform that packs in more memory, network bandwidth and compute capacity than the previous generation Zion platform. Grand Teton sports twice the network bandwidth and four times […]

NVIDIA and Oracle are partnering to speed up AI adoption by enterprises across industries. The collaboration will bring together the full NVIDIA accelerated computing stack and Oracle Cloud Infrastructure (OCI). OCI is expanding its capacity […]

NVIDIA and Booz Allen Hamilton are collaborating to bring an AI-enabled, GPU-accelerated cybersecurity platform to the public and private sectors. Powered by NVIDIA GPUs and NVIDIA Morpheus, the platform enables next-generation incident response systems that […]

By Edward Lim At the next virtual GPU Technology Conference (GTC) from September 19 to 22, NVIDIA Founder and CEO Jensen Huang will announce AI and metaverse technologies in a news-packed keynote. His keynote will […]

Siemens and NVIDIA are teaming up to enable the industrial metaverse and increase use of AI-driven digital twin technology to help bring industrial automation to a new level. First up is a plan to connect […]

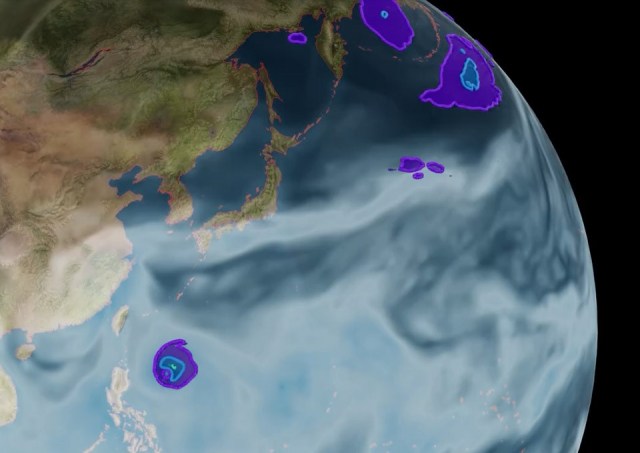

Floods, wildfires and other climate-related disasters have a major impact on countries around the world, including several in the Asia-Pacific region. With a little help from AI, the United Nations Satellite Centre (Unosat) hopes to improve […]

Dell Technologies, F5, Intel, Keysight Technologies, Marvell, NVIDIA and Red Hat are among a growing number of tech companies that are co-founders of The Linux Foundation’s Open Programmable Infrastructure (OPI) Project. OPI aims to foster […]