Growing interest in generative AI has brought concern about how it can be used to generate responses for nefarious purposes. To mitigate this risk, NVIDIA has developed NeMo Guardrails, open-source software that helps developers guide generative AI applications to create impressive text responses that stay on track.

NeMo Guardrails will help ensure smart applications powered by large language models (LLMs) are accurate, appropriate, on topic, and secure. The software includes all the code, examples and documentation businesses need to add safety to AI apps that generate text.

Designed to work with all LLMs, such as OpenAI’s ChatGPT, the software lets developers align LLM-powered apps to be safe and to stay within the domains of a company’s expertise.

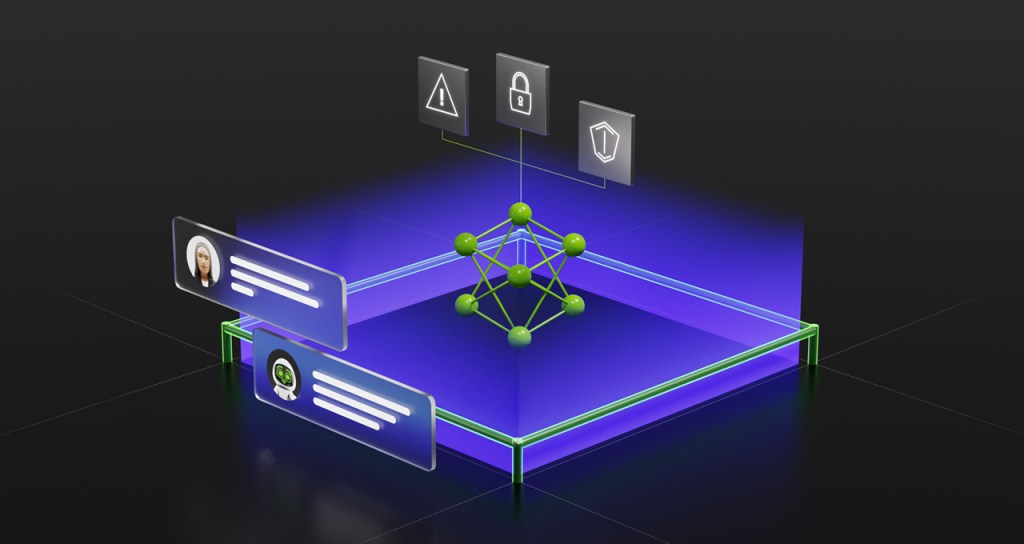

NeMo Guardrails lets developers set three kinds of boundaries:

- Topical guardrails prevent apps from veering off into undesired areas. For example, they keep customer service assistants from answering questions about the weather.

- Safety guardrails ensure apps respond with accurate, appropriate information. They can filter out unwanted language and ensure that references are made only to credible sources.

- Security guardrails restrict apps to making connections only to external third-party applications known to be safe.
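As a rough illustration of these three boundary types, here is a minimal plain-Python sketch. It does not use the NeMo Guardrails library or its rule syntax. The class `SimpleGuardrails`, its methods, the keyword lists, the host `api.example.com`, and the stand-in `fake_llm` function are all hypothetical names chosen for this example, and simple keyword matching is a deliberately crude substitute for the kind of checks a real guardrails system would perform.

```python
import re
from dataclasses import dataclass
from typing import Callable
from urllib.parse import urlparse

REFUSAL = "Sorry, I can only help with questions about your order or account."


@dataclass(frozen=True)
class SimpleGuardrails:
    """Hypothetical illustration only; this is not the NeMo Guardrails API."""

    topic_keywords: frozenset[str]  # topical: words that mark a message as on topic
    blocked_terms: frozenset[str]   # safety: language to remove from responses
    allowed_hosts: frozenset[str]   # security: third-party hosts known to be safe

    def on_topic(self, message: str) -> bool:
        # Topical guardrail: accept the message only if it mentions an allowed keyword.
        words = set(re.findall(r"[a-z]+", message.lower()))
        return bool(words & self.topic_keywords)

    def scrub(self, response: str) -> str:
        # Safety guardrail: replace each blocked term with a placeholder.
        for term in self.blocked_terms:
            pattern = rf"\b{re.escape(term)}\b"
            response = re.sub(pattern, "[removed]", response, flags=re.IGNORECASE)
        return response

    def may_connect(self, url: str) -> bool:
        # Security guardrail: allow connections only to hosts on the allowlist.
        return urlparse(url).hostname in self.allowed_hosts

    def respond(self, message: str, llm: Callable[[str], str]) -> str:
        # Check the topic before calling the model, then scrub the model's output.
        if not self.on_topic(message):
            return REFUSAL
        return self.scrub(llm(message))


def fake_llm(message: str) -> str:
    # Stand-in for a real LLM call (assumption made for this example).
    return "Your darn order shipped yesterday."


rails = SimpleGuardrails(
    topic_keywords=frozenset({"order", "refund", "account", "shipping"}),
    blocked_terms=frozenset({"darn"}),
    allowed_hosts=frozenset({"api.example.com"}),
)

print(rails.respond("What is the weather like today?", fake_llm))
print(rails.respond("Where is my order?", fake_llm))
print(rails.may_connect("https://api.example.com/v1/orders"))
```

In this sketch the weather question is refused before the model is ever called, the on-topic answer has its blocked word replaced, and only the allowlisted host passes the connection check.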

Developers can create new rules quickly with a few lines of code. That makes NeMo Guardrails a much-needed tool for safeguarding the use of generative AI apps.

NVIDIA is incorporating NeMo Guardrails into the NVIDIA NeMo framework, which includes everything users need to train and tune language models using a company’s proprietary data.

Much of the NeMo framework is already available as open-source code on GitHub. Enterprises can also get it as a complete and supported package, part of the NVIDIA AI Enterprise software platform.

NVIDIA made NeMo Guardrails open source to contribute to the developer community’s tremendous energy and work on AI safety.