Amazon Web Services (AWS) has announced the general availability of Amazon Elastic Compute Cloud (Amazon EC2) P4d instances, the next generation of GPU-powered instances.

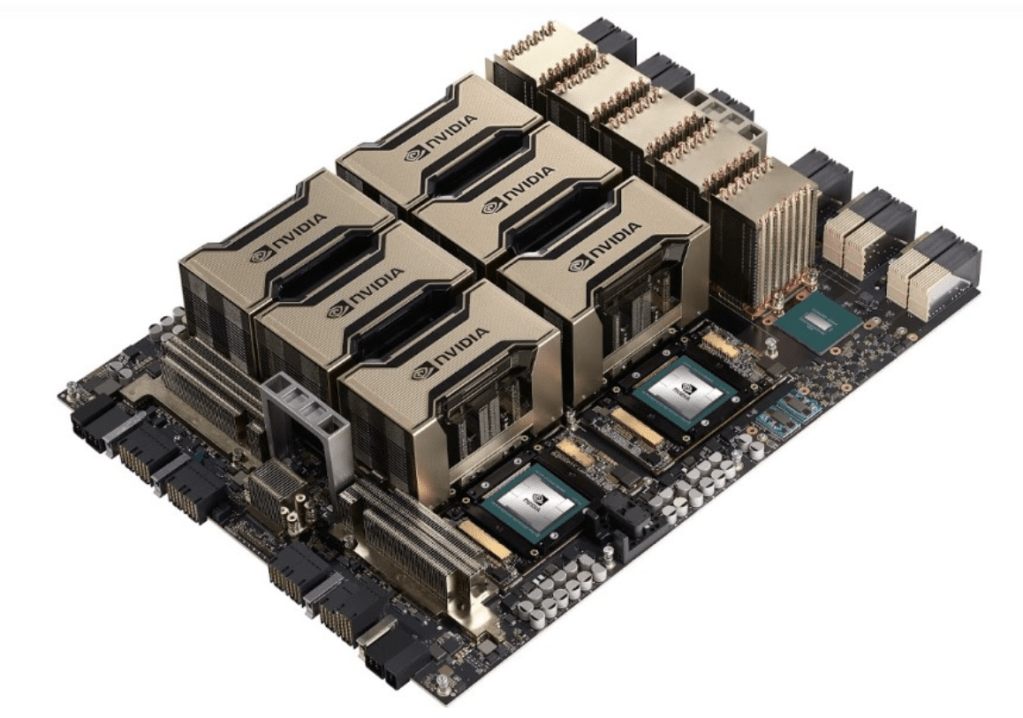

Featuring eight NVIDIA A100 Tensor Core GPUs and 400 Gbps of network bandwidth, the new instances deliver 3x faster performance, up to 60 percent lower cost, and 2.5x more GPU memory for machine learning training and high-performance computing (HPC) workloads compared to previous-generation P3 instances.

By combining P4d instances with AWS’s Elastic Fabric Adapter (EFA) and NVIDIA GPUDirect RDMA (remote direct memory access), customers can deploy P4d instances in EC2 UltraClusters.

Users can scale P4d instances to more than 4,000 A100 GPUs using AWS-designed, non-blocking, petabit-scale networking integrated with Amazon FSx for Lustre high-performance storage. This gives customers on-demand access to supercomputing-class performance to accelerate machine learning training and HPC.

“The pace at which our customers have used AWS services to build, train, and deploy machine learning applications has been extraordinary. Now, with EC2 UltraClusters of P4d instances powered by NVIDIA’s latest A100 GPUs and petabit-scale networking, we’re making supercomputing-class performance available to virtually everyone,” said Dave Brown, Vice President of EC2 at AWS.

P4d instances are now available in the US and will be available in additional regions soon.

“The first decade of GPU cloud computing has brought over 100 exaflops of AI compute to the market. With the arrival of the Amazon EC2 P4d instance powered by NVIDIA A100 GPUs, the next decade of GPU cloud computing is off to a great start,” wrote Ian Buck, General Manager and Vice President of Accelerated Computing at NVIDIA.