Synthesised speech is nothing new: think of virtual assistants on smartphones and smart speakers, or GPS navigation. These voices can sound robotic and monotonous, even when there is a choice of voice or accent. All this is about to change as NVIDIA researchers use AI to bring synthesised speech to life.

The researchers are building models and tools for high-quality, controllable speech synthesis that capture the richness of human speech, including its intonation, timbre and emotion.

Their models are being demonstrated at Interspeech 2021, which runs through September 3 at the Brno Exhibition Centre in the Czech Republic.

These models can help voice automated customer service lines for banks and retailers, bring video-game or book characters to life, and provide real-time speech synthesis for digital avatars.

Expressive speech synthesis is just one element of NVIDIA Research’s work in conversational AI, which encompasses natural language processing, automated speech recognition, keyword detection, and audio enhancement.

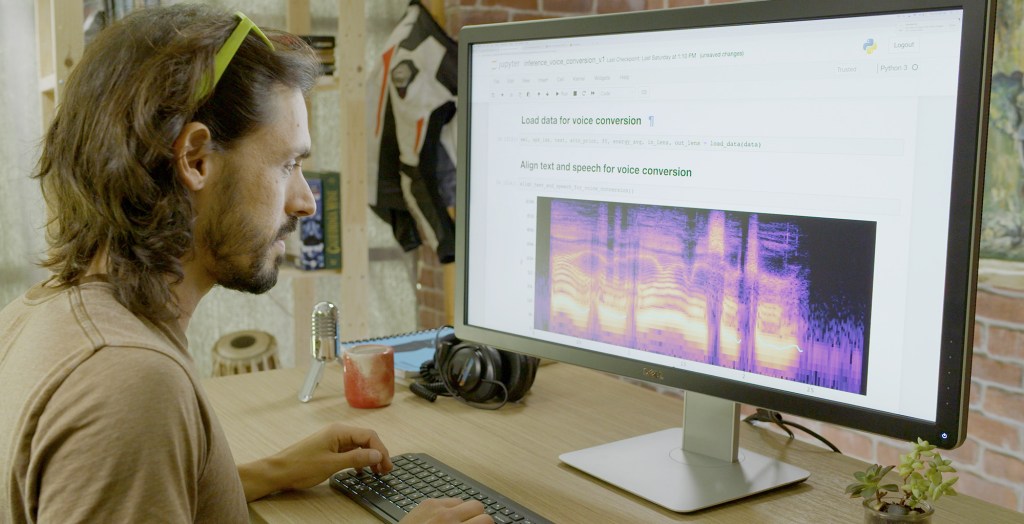

One notable feature developed by NVIDIA researchers is voice conversion, where one speaker’s words (or even singing) are delivered in another speaker’s voice. Inspired by the idea of the human voice as a musical instrument, the RAD-TTS interface gives users fine-grained, frame-level control over the synthesised voice’s pitch, duration and energy.
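To give a rough sense of what frame-level control means, here is a minimal Python sketch. It is not the RAD-TTS interface or its data format: the `FrameControls` class and the `shift_pitch` and `stretch_durations` helpers are hypothetical names made up for illustration. It assumes speech is described by one pitch value and one energy value per short audio frame, plus a frame count per phoneme.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FrameControls:
    """Hypothetical per-frame control track (not the RAD-TTS format)."""
    pitch_hz: List[float]  # fundamental frequency per frame; 0.0 marks an unvoiced frame
    energy: List[float]    # relative loudness per frame


def shift_pitch(track: FrameControls, semitones: float) -> FrameControls:
    """Return a copy with every frame's pitch moved by a musical interval."""
    ratio = 2.0 ** (semitones / 12.0)  # 12 semitones is one octave, i.e. double the frequency
    return FrameControls(
        pitch_hz=[f * ratio for f in track.pitch_hz],  # unvoiced frames stay at 0.0
        energy=list(track.energy),
    )


def stretch_durations(frames_per_phoneme: List[int], factor: float) -> List[int]:
    """Scale how many frames each phoneme lasts, keeping at least one frame each."""
    return [max(1, round(n * factor)) for n in frames_per_phoneme]


if __name__ == "__main__":
    track = FrameControls(pitch_hz=[220.0, 0.0, 330.0], energy=[0.5, 0.1, 0.8])
    print(shift_pitch(track, 12).pitch_hz)      # one octave up
    print(stretch_durations([3, 5, 2], 2.0))    # speak at half speed
```

In a real system these edited tracks would be passed to the synthesis model, which renders audio that follows them. That step is outside this sketch.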

With NVIDIA NeMo — an open-source Python toolkit for GPU-accelerated conversational AI — researchers, developers and creators can gain a head start in experimenting with, and fine-tuning, speech models for their own applications.